Meta Tests Out New Generative AI Features with Small Group of Advertisers

Google’s doing it. Microsoft’s doing it. So it’s no surprise that Meta is getting into the generative AI game as well. On May 11 the parent company of Facebook, Instagram and WhatsApp unveiled several new generative AI applications for its platform that it is currently trialing with a small group of advertisers.

Also in line with other major tech players, Meta made it very clear at the press event announcing the new tools that AI is nothing new for the company, highlighting that it has been using artificial intelligence on its platform for years. “The world businesses operate in today is very different than even a few years ago, with a number of rapidly evolving trends,” said John Hegeman, VP of Monetization at Meta, at the May 11 event. “Over the past few years, a big part of how we’ve been responding to these changes is via investments in machine learning and artificial intelligence. For years, we’ve been using AI to help us rank and choose the most relevant ads to show each person, and over time, we’ve been using AI in more and more parts of our work across our products, such as to help us measure results more accurately for advertisers, or to automate more parts of the advertiser experience.”

What’s new now is the “generative” part of the equation. Meta has created an “AI Sandbox” where it is testing early versions of several new tools and features powered by generative AI. Only a small group of advertisers currently have access, but more advertisers will be invited to participate in July 2023, with access gradually expanding throughout the year.

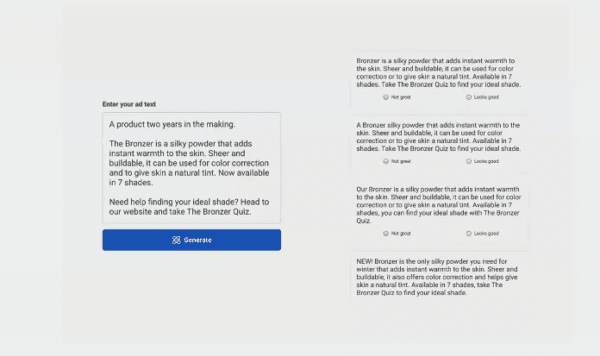

To start, Meta is testing three new capabilities in its AI Sandbox, including:

- Text variations with a tool that will generate multiple versions of advertising text, enabling advertisers to try out different messages for specific audiences;

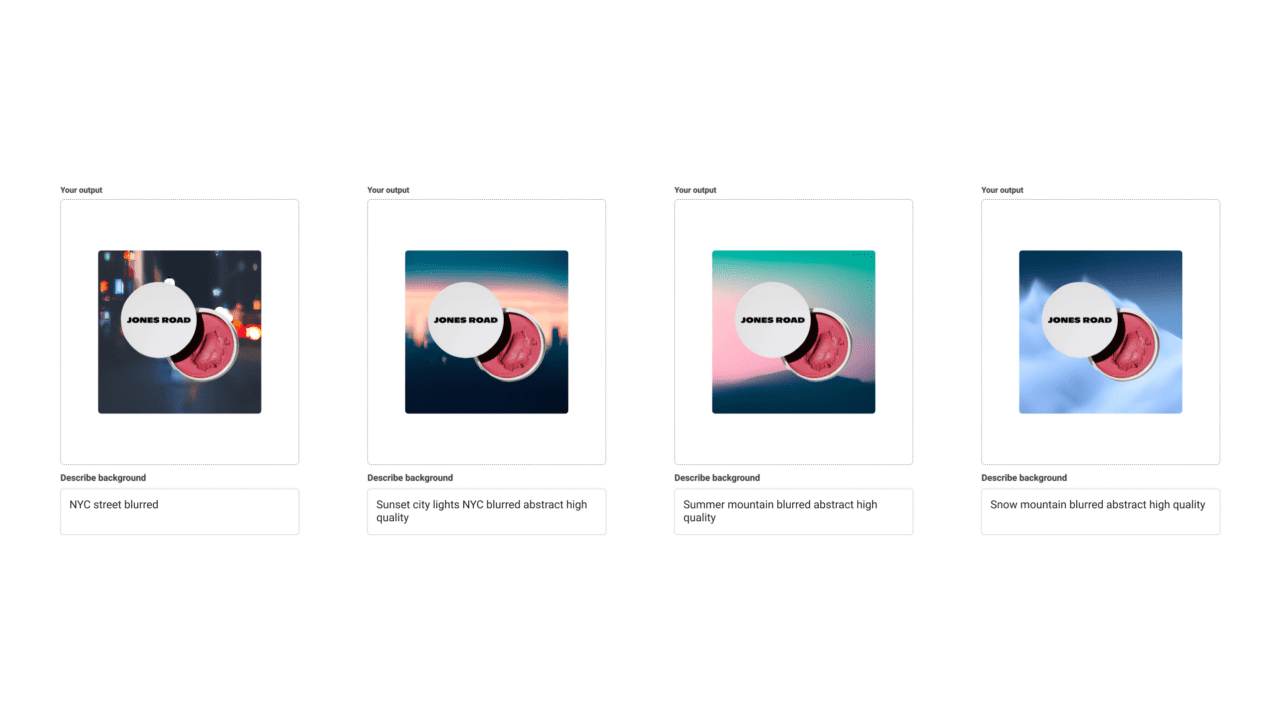

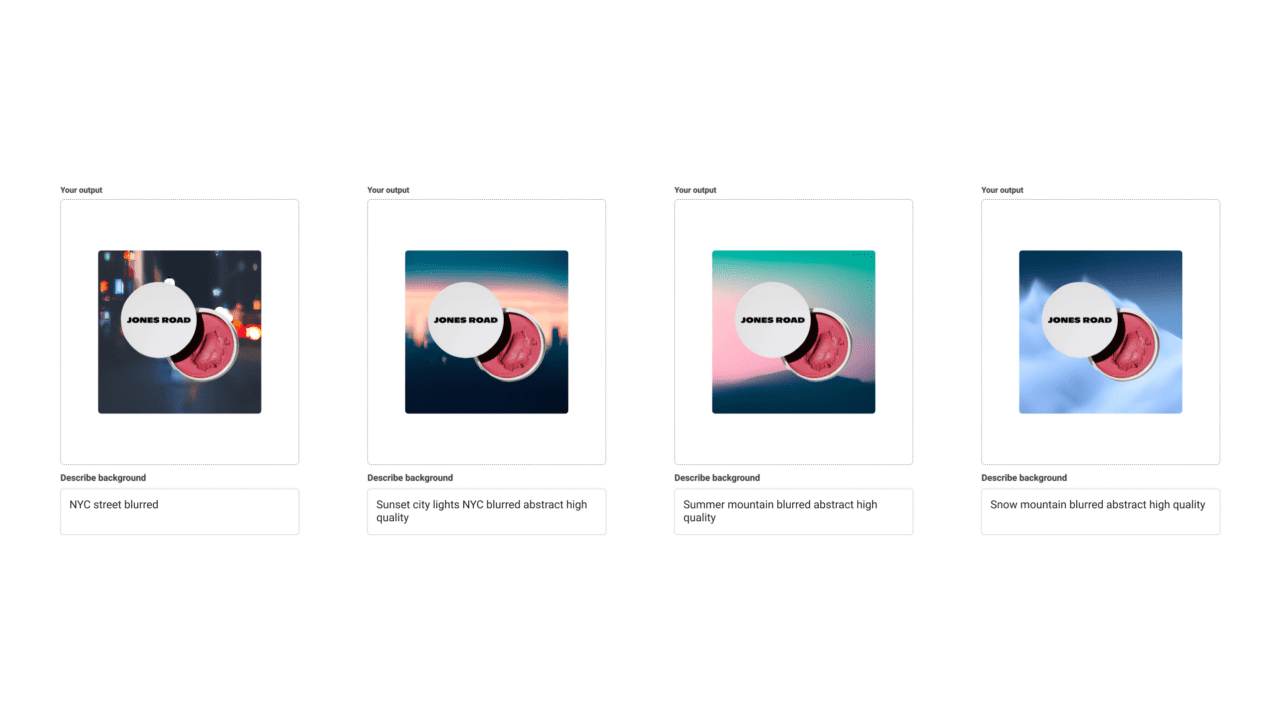

- Background generation, which generates background images from text inputs, allowing advertisers to try various backgrounds more quickly to diversify their creative assets; and

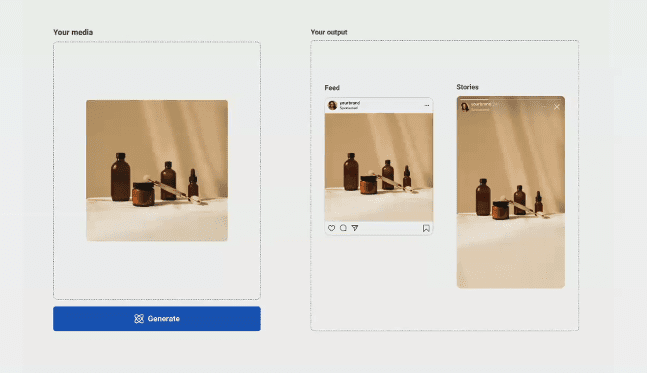

- Image outcropping, which will adjust creative assets to fit different aspect ratios across multiple surfaces like Stories or Reels, allowing advertisers to spend less time and resources on repurposing creative assets.

“We recognize that there are a number of [generative AI] tools that businesses are already using out there, and will continue to use,” said Hegeman. “What we see as our unique opportunity is to tightly integrate these features over time into our products, which will allow advertisers to do a number of powerful things to allow them to further personalize their messages for different parts of their audience. This whole space is developing rapidly, so we’re really focused on having this iterative approach of learning what’s working best as we figure out which types of new capabilities are going to be most helpful.”

Jones Road Among First Brands to Test AI Sandbox

While Meta executives declined to name the full set of advertisers currently testing AI Sandbox, one of them is Bobbi Brown’s new DTC beauty brand Jones Road. Cody Plofker, CMO of Jones Road Beauty (and also Brown’s son), shared how those initial tests were going at the press event: “I love the idea of getting more copy variations and ideas. It’s not going to replace our team writing copy, but it’s great to get ideas and [will allow us to] test and create new variations a little bit quicker.

“One thing that I’m really excited about is the image enhancement, where you’re able to easily resize, because currently our team [has to create every asset] in multiple sizes, and that takes time,” Plofker added. “Being able to just upload one aspect ratio of creative, and have that work and look good across all placements, is something that would save us a ton of time.”

Meta has represented the bulk of Jones Road’s advertising budget from “day one,” Plofker shared. The brand also uses many of Meta’s “Advantage” AI- and machine learning-powered automated advertising tools, and Plofker is a big proponent of the potential for generative AI to further streamline marketing processes.

“We as marketers should be embracing [generative AI] and not be afraid of it,” he said. “We shouldn’t be thinking about AI potentially taking jobs, we should be thinking about how it can allow us to do our jobs better and to have more time to potentially focus on things that are more important. I’m excited about text-to-image based AI, like with the AI Sandbox, where we’re able to produce more assets or maybe just get ideas for assets that our team can create.”

This is just the beginning for Meta in the realm of generative AI, with Hegeman saying that the tools unveiled on May 11 are “certainly not all we’re exploring.” Another potential use case: the ability to use generative AI for chats in its business messaging platforms, which could allow businesses to quickly localize their messaging chats and automate customer exchanges.

“Our general approach here with our ad platform is that we want to help provide tools that improve performance for advertisers, because when advertisers see better performance, they’re going to choose to spend more on our platform,” said Hegeman.

But What About the Metaverse?

Meta famously rebranded back in 2021 to reflect a new focus on metaverse-related products, but since that time the general fervor for the metaverse has died down, as have Meta’s announcements in that arena. The company is still very much focused on this tech, though, according to Nicola Mendelsohn, VP of the Global Business Group at Meta.

“There’s been a thing out there that we’re not interested in the metaverse anymore,” she said at the press event. “We are still really interested in the metaverse, but we’re also really clear that this whole thing is going to be five or 10 years before the vision of what we’re talking about [is realized]. If you want to build a world in [the metaverse], at the moment it’s quite funky to be able to do that, but in the future, utilizing things like generative AI and machine learning, you will be able to say, ‘I want to build a room,’ and there will be lots of tools to make that happen. That’s just one tiny example, but I think [there will be] so many different applications when we [reach that point].”

Hegeman also drove home the idea that Meta views investments in AI and metaverse technologies as complementary: “AI will help us develop the metaverse more effectively, and then the metaverse will be another great opportunity to create value for folks with AI,” he said.